Milla Jovovich Develops MemPalace: The Ultimate AI Memory System

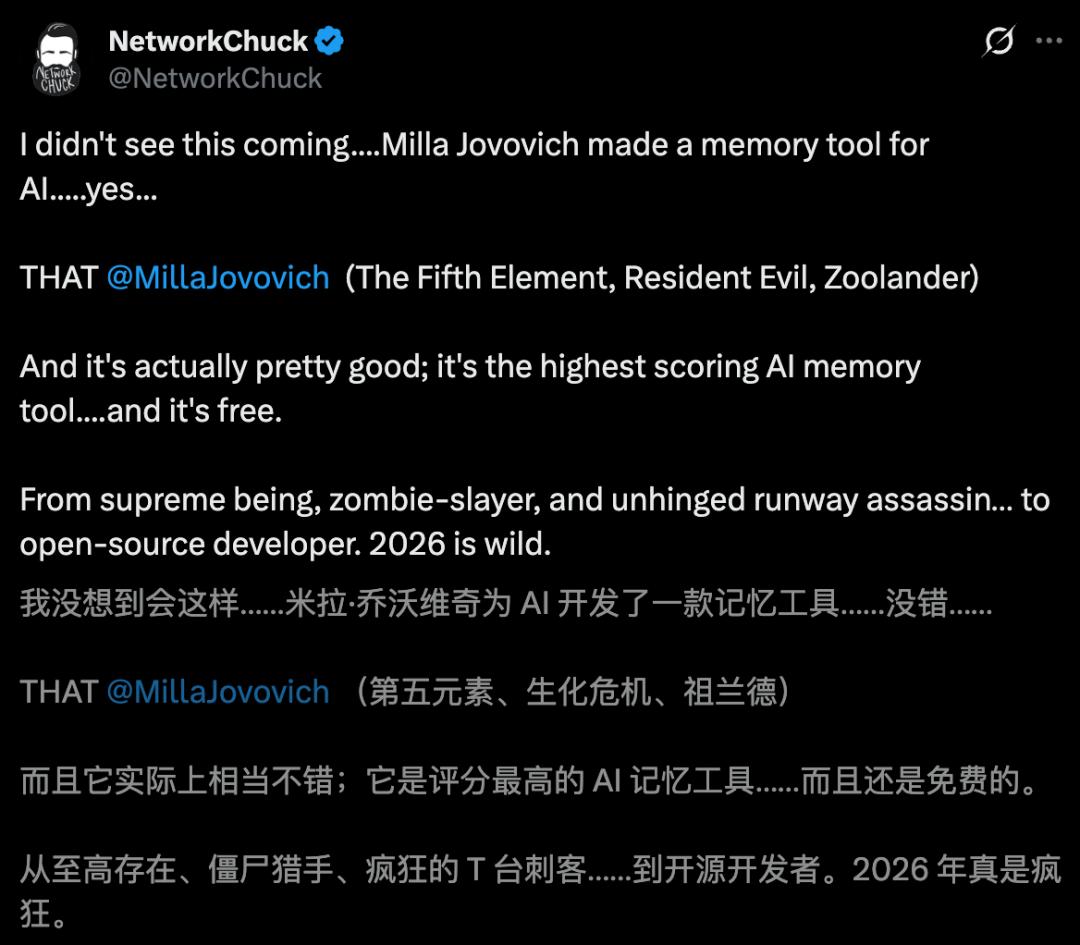

Recently, the internet has been buzzing about an open-source AI memory system called MemPalace, which is being touted as the most capable AI memory system available.

To everyone’s surprise, one of the core developers behind this project is none other than Hollywood star Milla Jovovich, known for her roles in “The Fifth Element” and “Resident Evil.”

After finishing her work on set and attending fashion shows, she dedicates her nights to coding.

Together with her engineer friend Ben Sigman, she worked with Claude to build and open-source this star-studded project.

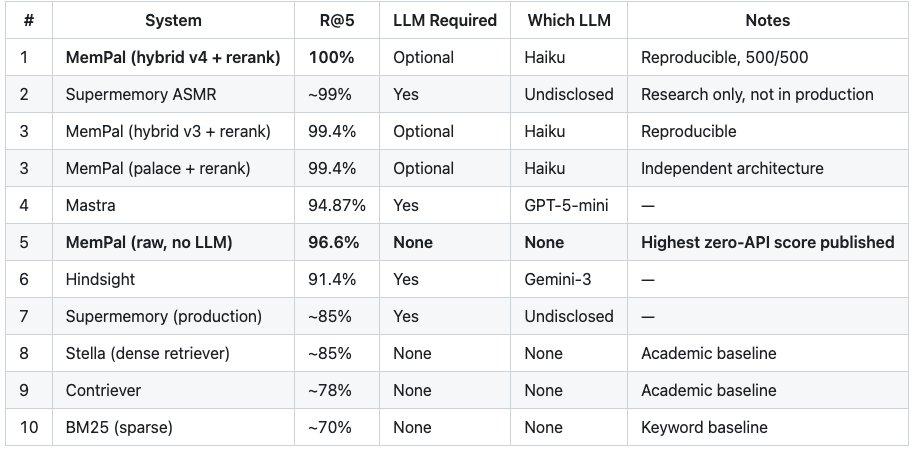

MemPalace is reported to have scored 500 out of 500 on LongMemEval, widely regarded as the most rigorous long-term memory benchmark, which would be the first perfect score on record.

As of now, MemPalace has garnered 17.9k stars and over 2k forks on GitHub.

GitHub link:

https://github.com/milla-jovovich/mempalace

The Birth of MemPalace

The creation of MemPalace was somewhat serendipitous. Six months ago, Ben Sigman introduced Milla to Claude Code. As a passionate writer, she quickly realized that Claude could transform her imaginative ideas into functioning code.

However, during the development of a large game, she encountered an “invisible wall.” Milla discovered that while AI is powerful, it lacks “soul” and “accumulation.” AI can only master what has been done before. The true creation of unique and distinct content comes from the human user.

Without our imagination and insatiable curiosity, AI is merely a search engine.

This realization was not just theoretical; it was a specific pain point she faced during development. Every time she started a new conversation with the AI, previous discussions, rejected designs, and failed ideas were all reset.

Milla keenly recognized that solving the issue of AI’s long-term memory was even more crucial than the game project itself. She and Ben decided to pivot and turn this “roadblock” into an independent project.

Milla took on the role of “architect” to reshape the logic, while Ben implemented the blueprint in code. After six months of collaboration, they officially launched the system named MemPalace.

What is MemPalace?

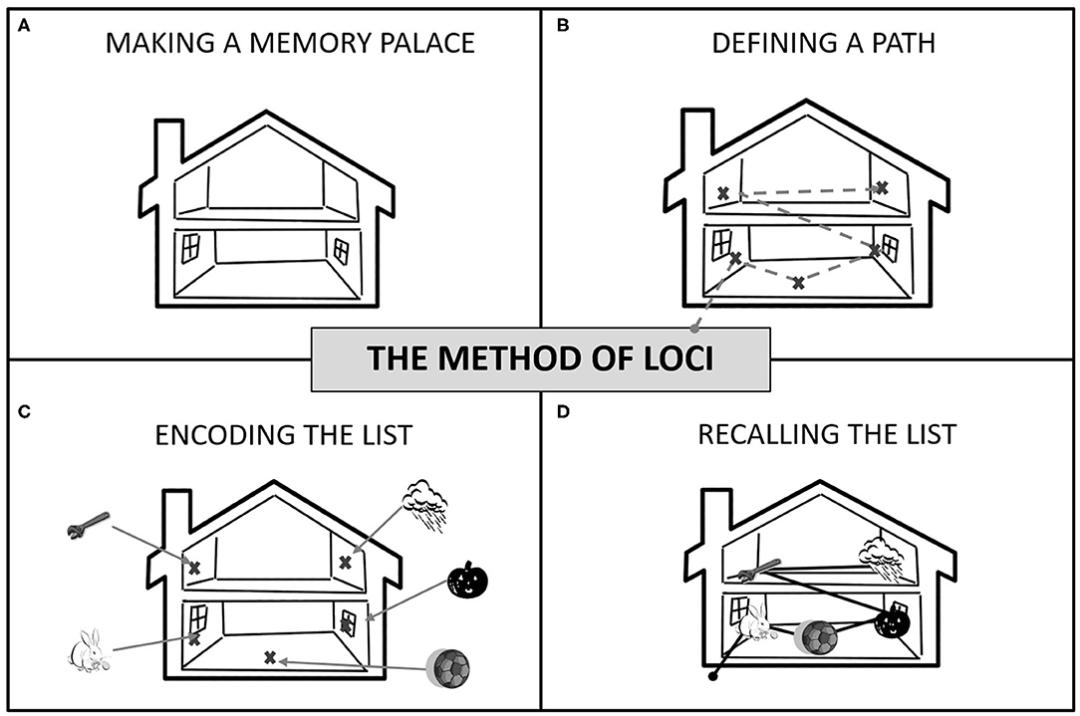

The name MemPalace is inspired by an ancient Greek memory technique. Two thousand years ago, orators in ancient Greece used the “Method of Loci” to memorize long speeches: they placed each segment of a speech in a different room of an imagined palace, then mentally walked through the palace to retrieve it.

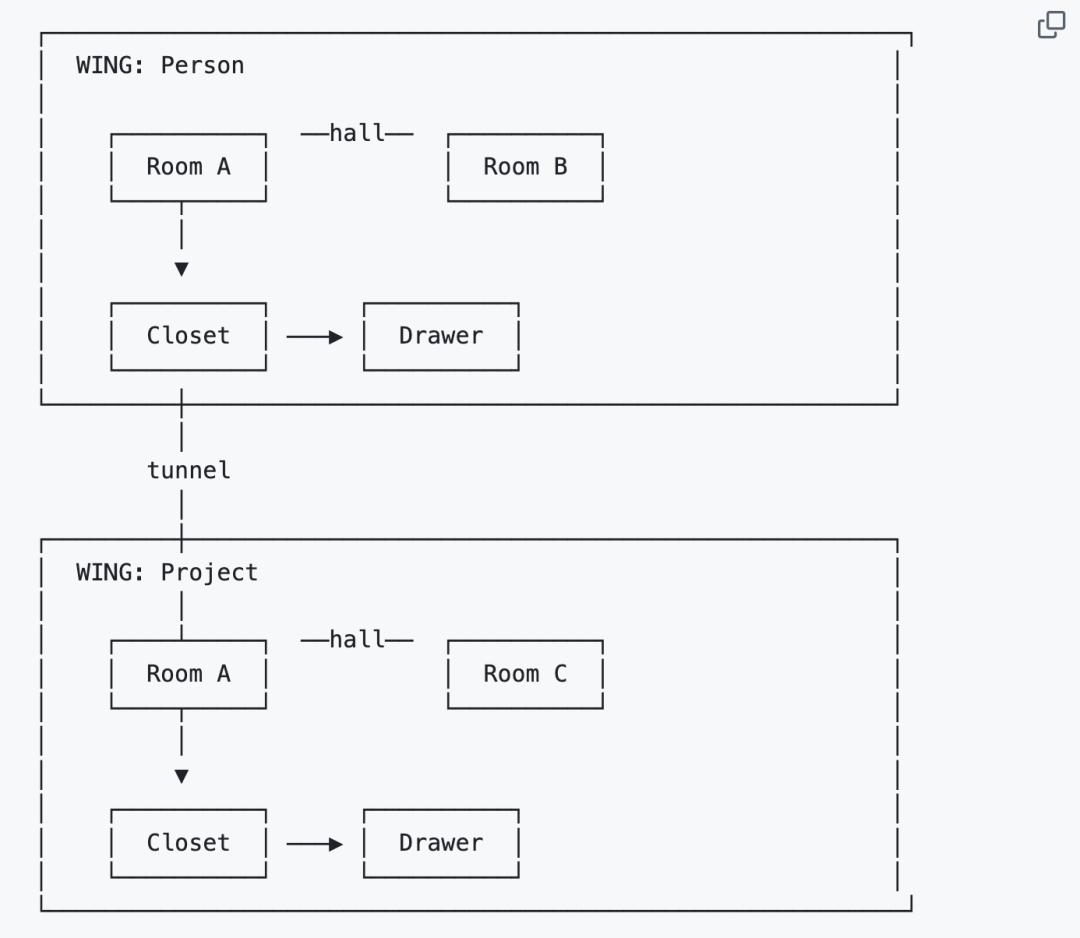

MemPalace borrows from this technique, structuring data and creating a virtual space: each project, person, and topic is a “wing” in the palace.

Within each wing are “rooms” organized by theme: one for authentication systems, another for database selection, another for deployment processes, and so on. The rooms are connected by “halls,” five fixed pathways organized by memory type: decisions, milestones, preferences, suggestions, and discoveries.

The system automatically generates “tunnels” between rooms with the same name across different wings. For example, if the “Kai” wing and the “Driftwood” wing each have an “auth migration” room, a tunnel connects the two, instantly linking memories of the same topic from different perspectives.

Each room includes a “closet” storing summary indexes, while the “drawers” within contain the full original conversations, unaltered.
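The layout described above can be pictured as a small data structure. The following is a minimal sketch, not MemPalace's actual code: the class names, the `find_tunnels` helper, and the sample drawer text are all hypothetical, and halls are modeled simply as a label on each drawer.

```python
from collections import defaultdict
from dataclasses import dataclass, field

# The five hall types named in the article.
HALLS = ("decisions", "milestones", "preferences", "suggestions", "discoveries")


@dataclass
class Drawer:
    hall: str  # which of the five halls this memory belongs to
    text: str  # the original conversation, stored verbatim

    def __post_init__(self) -> None:
        if self.hall not in HALLS:
            raise ValueError(f"unknown hall: {self.hall}")


@dataclass
class Room:
    name: str
    closet: list = field(default_factory=list)   # summary index entries
    drawers: list = field(default_factory=list)  # Drawer objects


@dataclass
class Wing:
    name: str
    rooms: dict = field(default_factory=dict)

    def room(self, name: str) -> Room:
        # Create the room on first use, then reuse it.
        return self.rooms.setdefault(name, Room(name))


def find_tunnels(wings: list) -> dict:
    """Map each room name shared by two or more wings to those wings."""
    owners = defaultdict(list)
    for wing in wings:
        for room_name in wing.rooms:
            owners[room_name].append(wing.name)
    return {name: ws for name, ws in owners.items() if len(ws) > 1}


# Made-up sample data mirroring the article's "Kai" / "Driftwood" example.
kai = Wing("Kai")
driftwood = Wing("Driftwood")
kai.room("auth migration").drawers.append(Drawer("decisions", "placeholder chat A"))
driftwood.room("auth migration").drawers.append(Drawer("milestones", "placeholder chat B"))
driftwood.room("deployment").drawers.append(Drawer("preferences", "placeholder chat C"))

tunnels = find_tunnels([kai, driftwood])
print(tunnels)  # {'auth migration': ['Kai', 'Driftwood']}
```

Only the room name shared by both wings produces a tunnel; “deployment” exists in a single wing, so it stays unconnected.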

During searches, the AI does not need to sift through all data. It first locates the wing, enters the room, and opens the drawer—narrowing the search from the entire database to precise hits.
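To see why narrowing to a wing and room can change what a search returns, here is a toy sketch. It is an illustration only: plain word overlap stands in for the embedding similarity a vector store would use, and the `search` function, its parameters, and the sample memories are hypothetical.

```python
from typing import Optional


def words(text: str) -> set:
    """Lowercased word set; a crude stand-in for an embedding."""
    return set(text.lower().split())


def search(memories: list, query: str, wing: Optional[str] = None,
           room: Optional[str] = None, top_k: int = 1) -> list:
    """Rank memories by word overlap, optionally restricted to a wing/room."""
    candidates = [
        m for m in memories
        if (wing is None or m["wing"] == wing)
        and (room is None or m["room"] == room)
    ]
    q = words(query)
    ranked = sorted(candidates, key=lambda m: len(q & words(m["text"])), reverse=True)
    return ranked[:top_k]


# Made-up memories; the second one shares more words with the query.
memories = [
    {"wing": "Kai", "room": "auth migration",
     "text": "switch auth to short lived tokens"},
    {"wing": "Driftwood", "room": "database selection",
     "text": "why did we switch the database"},
]

query = "why did we switch auth"
unfiltered = search(memories, query)[0]
scoped = search(memories, query, wing="Kai", room="auth migration")[0]

print(unfiltered["room"])  # database selection
print(scoped["room"])      # auth migration
```

Without a filter, the lexically closer but off-topic memory wins; once the candidate set is limited to one wing and room, the intended memory is the only one left to rank.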

In tests on over 22,000 real conversation memories, the overall search recall rate was 60.9%. After filtering by wing and room, it jumped to 94.8%, an increase of roughly 34 percentage points.

In other words, the structure itself enhances retrieval capabilities. Moreover, all data is stored locally in ChromaDB, avoiding API calls, cloud usage, and costs.

Affordable Memory

For a mere $0.70 a year, users can remember everything. By Milla’s estimate, a heavy AI user could accumulate about 19.5 million tokens of conversation history over six months. Having a large model summarize that history would cost approximately $507 a year and would risk losing critical reasoning along the way.

With MemPalace, the AI loads only about 170 tokens of key facts each time it starts up (your team, projects, and preferences) and retrieves anything else only when necessary.
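The article does not say which token price or usage pattern lies behind the $0.70 and $507 figures, so the sketch below does not try to reproduce them. It only shows the shape of the arithmetic: the 170-token load and the 19.5 million tokens come from the text, while the loads per day and the price per million tokens are placeholder assumptions.

```python
def yearly_cost_usd(tokens_per_load: int, loads_per_year: int,
                    usd_per_million_tokens: float) -> float:
    """Cost of re-sending a fixed block of tokens on every load."""
    return tokens_per_load * loads_per_year * usd_per_million_tokens / 1_000_000


STARTUP_TOKENS = 170          # from the article
HISTORY_TOKENS_6_MONTHS = 19_500_000  # from the article

LOADS_PER_DAY = 5             # assumption, not from the article
USD_PER_MILLION_TOKENS = 3.0  # assumption, not from the article

loads_per_year = LOADS_PER_DAY * 365
startup_tokens_per_year = STARTUP_TOKENS * loads_per_year
history_tokens_per_year = HISTORY_TOKENS_6_MONTHS * 2  # if the pace holds

cost = yearly_cost_usd(STARTUP_TOKENS, loads_per_year, USD_PER_MILLION_TOKENS)

print(f"startup tokens per year: {startup_tokens_per_year:,}")
print(f"history tokens per year: {history_tokens_per_year:,}")
print(f"startup cost under these assumptions: ${cost:.2f}")
```

Whatever prices are plugged in, the point of the comparison is the gap between a few hundred thousand startup tokens a year and tens of millions of history tokens.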

AAAK: A Note-Taking Method for AI

MemPalace also features a design called AAAK, a compressed dialect written for AI rather than for humans. For example, a 1000-token English passage is said to compress into approximately 120 tokens using AAAK, preserving the information while cutting the token count roughly eightfold.

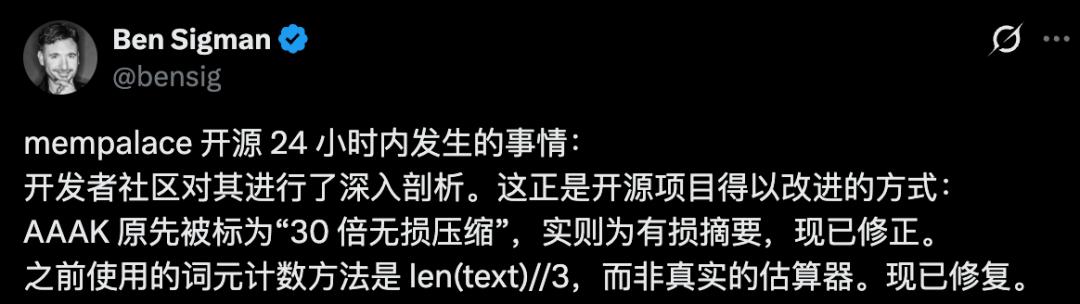

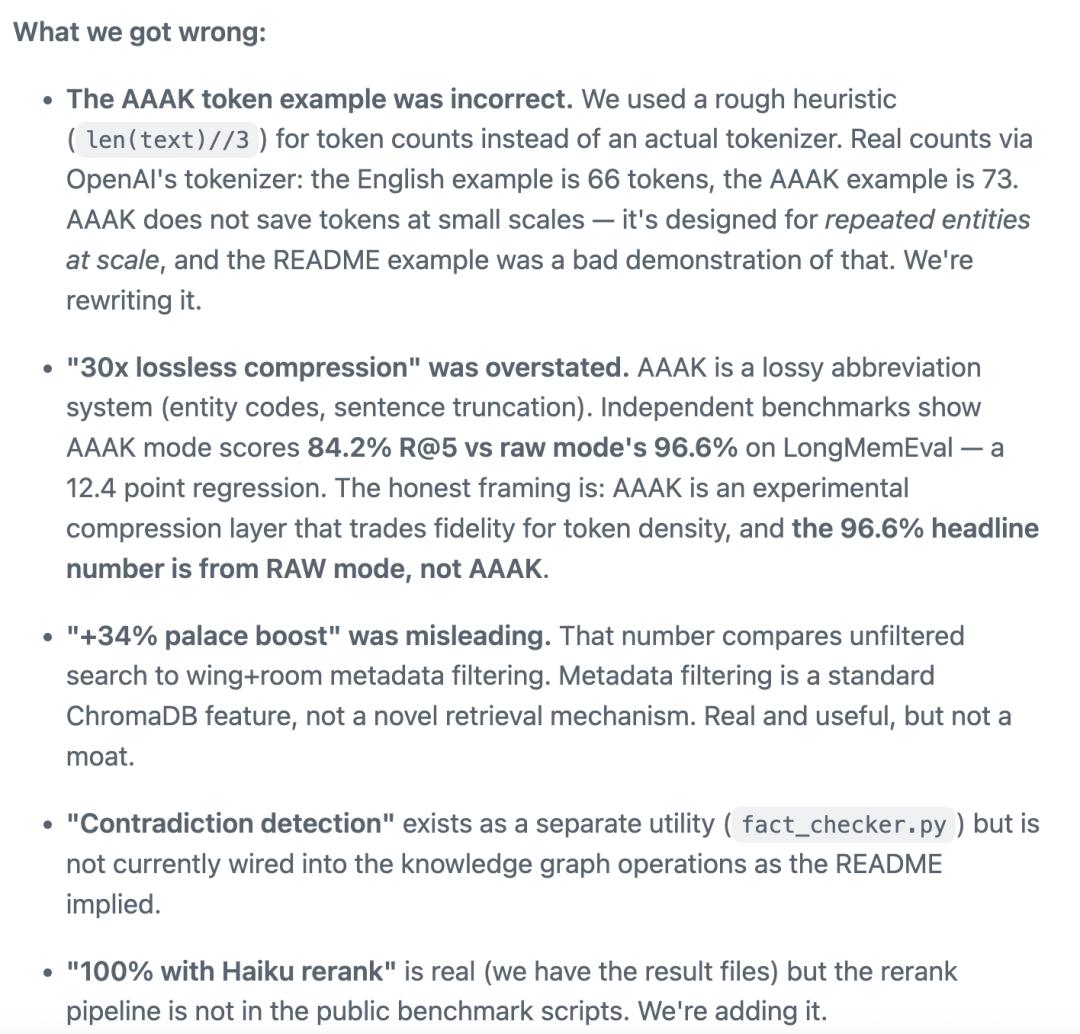

Community Scrutiny

However, the story doesn’t end there. Within 48 hours of MemPalace’s launch, the open-source community had thoroughly examined the project. The first critique targeted AAAK, which the project had officially claimed could achieve “30 times lossless compression.”

The community found that the examples in the project did not actually save tokens—an English original of 66 tokens became 73 after AAAK encoding. Furthermore, AAAK was lossy, not lossless. In LongMemEval, the AAAK mode scored only 84.2%, lower than the 96.6% of the raw mode by 12.4 percentage points.
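The figures quoted in the article can be checked with simple arithmetic. This sketch takes token counts as plain inputs; in practice they would have to come from a real tokenizer, which is exactly what the community found missing. The function name is hypothetical.

```python
def compression_ratio(original_tokens: int, encoded_tokens: int) -> float:
    """Return original / encoded; a value below 1.0 means the text grew."""
    if encoded_tokens <= 0:
        raise ValueError("encoded_tokens must be positive")
    return original_tokens / encoded_tokens


claimed = compression_ratio(1000, 120)  # the advertised example
observed = compression_ratio(66, 73)    # the example the community measured

# Gap between raw mode and AAAK mode on LongMemEval, in percentage points.
score_gap = round(96.6 - 84.2, 1)

print(f"claimed:  {claimed:.2f}x")   # claimed:  8.33x
print(f"observed: {observed:.2f}x")  # observed: 0.90x
print(f"score gap: {score_gap} points")  # score gap: 12.4 points
```

A ratio of 0.90 means the encoded version was about 10% longer than the original, the opposite of compression.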

The second critique focused on the claimed “+34% palace gain.” This figure compared direct searches without filtering to searches using wings and rooms for metadata filtering. Metadata filtering is a standard feature of ChromaDB, not unique to MemPalace.

The third critique addressed contradiction detection. The project suggested that the knowledge graph would automatically verify facts, but in reality fact_checker.py was a standalone script that was not integrated into the knowledge graph workflow.
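For readers unfamiliar with the idea, this is roughly what contradiction detection wired into the write path could look like. It is a hypothetical sketch, not the project's fact_checker.py or its knowledge graph: the `FactStore` class is invented here, and it assumes every (subject, predicate) pair holds a single value.

```python
from typing import Optional


class FactStore:
    """Toy fact store that checks for conflicts whenever a fact is added."""

    def __init__(self) -> None:
        self._facts = {}  # (subject, predicate) -> object

    def add(self, subject: str, predicate: str, obj: str) -> Optional[str]:
        """Store the fact; return the conflicting earlier value, or None."""
        key = (subject, predicate)
        previous = self._facts.get(key)
        if previous is not None and previous != obj:
            # Contradiction: keep the earlier value and report it to the caller.
            return previous
        self._facts[key] = obj
        return None

    def get(self, subject: str, predicate: str) -> Optional[str]:
        return self._facts.get((subject, predicate))


store = FactStore()
first = store.add("myapp", "api_style", "REST")
conflict = store.add("myapp", "api_style", "GraphQL")

print(first)     # None
print(conflict)  # REST
```

Because the check runs inside `add`, a conflicting fact cannot enter the store unnoticed, which is the difference between an integrated check and a script that must be run separately.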

Milla and Ben then did something rare in the open-source community. They did not delete comments or argue; instead, they posted an open letter at the top of the project, acknowledging each error.

They admitted that AAAK’s token example used a rough heuristic algorithm and did not run a true tokenizer. The claim of “30 times lossless compression” was rephrased to “lossy abbreviation system,” and the “+34% palace gain” was clarified as misleading wording, with an explanation that it was standard metadata filtering.

Regarding contradiction detection, they acknowledged the lack of integration and listed the issue numbers for fixes. The final line of the open letter stated, “We would rather be correct than appear impressive.”

The community’s reaction was interesting. After the critiques, more people began to evaluate the project seriously: the 96.6% score in raw mode was genuine, and so was the free, fully local setup.

The scrutiny did not kill MemPalace but instead provided a free trust audit.

Getting Started with MemPalace

pip install mempalace

- Set up your world (who you work with, what your projects are):

mempalace init ~/projects/myapp

- Mine your data:

mempalace mine ~/projects/myapp  # projects: code, docs, notes

mempalace mine ~/chats/ --mode convos  # convos: Claude, ChatGPT, Slack exports

mempalace mine ~/chats/ --mode convos --extract general  # general: classifies into decisions, milestones, problems

- Search anything you’ve ever discussed:

mempalace search "why did we switch to GraphQL"

- Your AI remembers:

mempalace status

To connect tools like Claude, ChatGPT, or Cursor that support MCP, just one command is needed:

claude mcp add mempalace -- python -m mempalace.mcp_server

After that, 19 tools are connected, and the AI will call them automatically. You will no longer need to manually type mempalace search.

What’s most remarkable about this project isn’t the headline benchmark score or the compression claims. It’s a reminder that the boundaries of “developer” in the AI era are disappearing.

A star known for “The Fifth Element” and “Resident Evil” and an engineer friend, using Claude, have achieved what major companies have been striving for over the past year.

The key is still the open-source, free, locally running version. Ben’s latest post even made a pun: MemPalace -> Multipass.

Those familiar with “The Fifth Element” know that’s Leeloo’s most iconic line, “Multi-pass.”
