Implementing AI Skills is not without its challenges. Moving from treating AI as a universal wishing well to using it as a precise tool takes many hard-won lessons. This article describes three typical pitfalls I ran into while implementing nearly ten AI Skills in practice: violating the single responsibility principle, overestimating AI’s ability to retrieve private data, and blindly trusting AI’s understanding of business nuances. Each one points to the same truth: AI is not magic; it requires careful input design and task breakdown.

Initially, I intended to share practical cases of encapsulating product methodologies into AI Skills. However, after implementing nearly ten Skills in my own work, I found a more relevant topic to discuss: the limitations of AI.

I realized that using AI as a tool and treating it as a “universal wishing well” are entirely different things. Amid the hype surrounding large models, I too have stumbled into many pitfalls.

Today, instead of discussing lofty success stories, I will talk about three specific pitfalls I encountered after developing nearly ten product-supporting Skills. I hope to provide some genuine insights for those trying to integrate AI into their daily work.

First Pitfall: Ignoring AI’s “Single Responsibility Principle”

In my previous article “How to Evaluate Workload with Skills”, I encountered this pitfall. I expected one Skill to help me output a complete product plan while simultaneously assessing the corresponding development workload. The result? It did neither task well, producing a vague plan and an absurd evaluation.

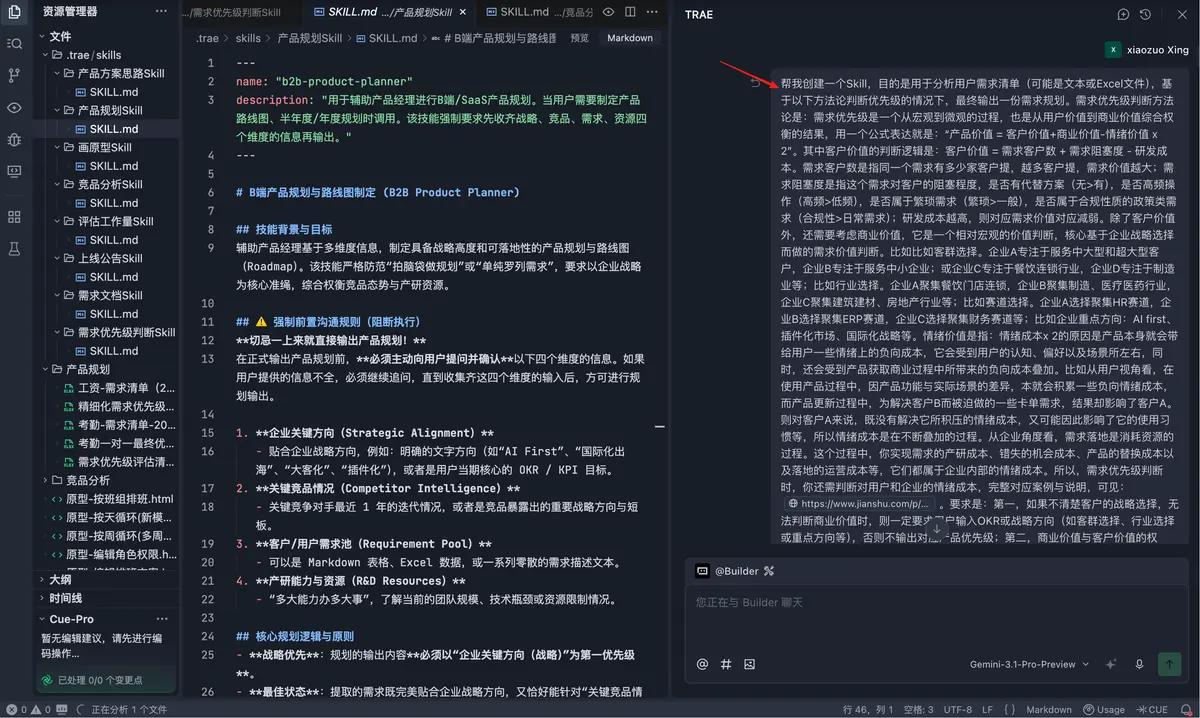

Yesterday, while writing the “Product Planning Skill”, I made the same mistake. I wanted one Skill to analyze the iteration status of key competitors over the past two years, evaluate my backlog of nearly a thousand requirements, and finally, based on our company’s strategic direction, provide a perfect “annual product plan”.

The outcome was a mess.

The solution? Break it down. I diligently split it into three independent Skills:

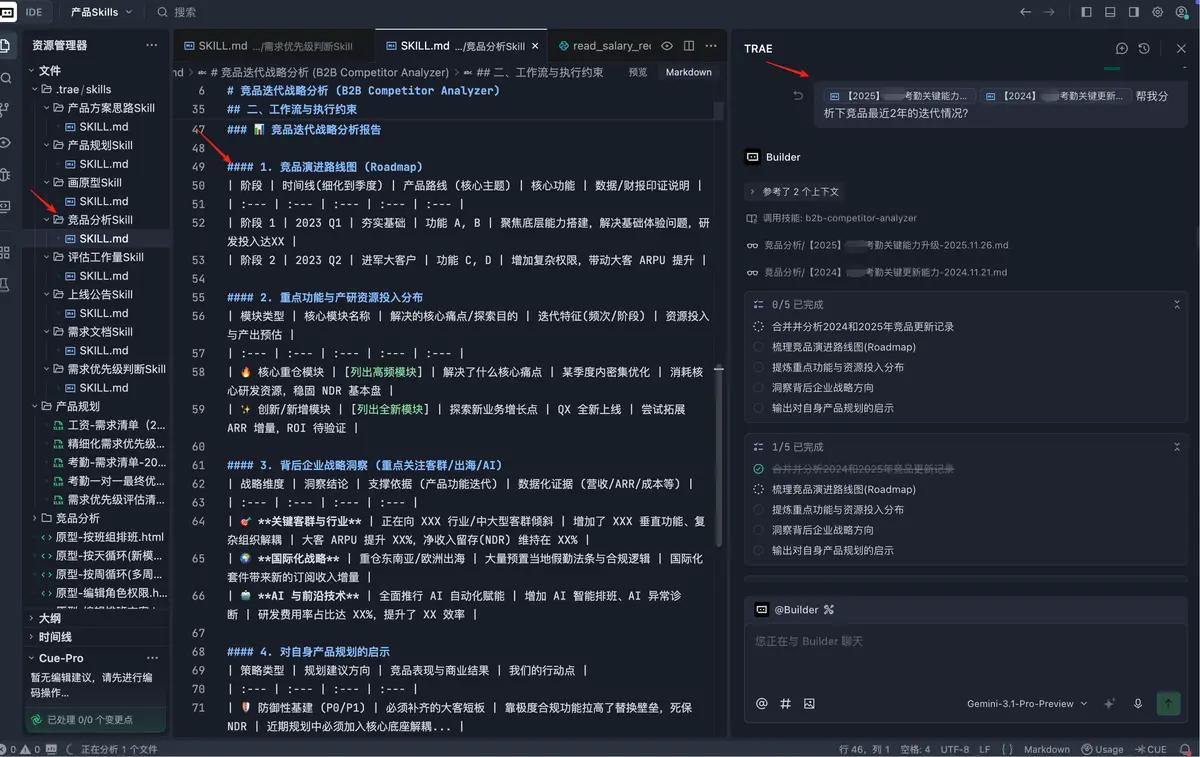

- Competitor Analysis Skill: Responsible only for analyzing the competitor logs I provided, outputting a roadmap and differentiation strategy.

- Requirement Prioritization Skill: Solely responsible for scoring my thousand requirements according to my methodology (P0-P4) and outputting a prioritized list.

- Product Planning Skill: Takes the results of the first two Skills, combines them with corporate strategy, and outputs the final product planning ideas and roadmap.

In summary: This is the most common and easily overlooked pitfall. Essentially, it reflects our unrealistic expectations of AI. We often believe it is powerful enough to understand all complex demands with a simple command.

It’s like telling a newly hired graduate: “Go review the competitors and our requirement pool, and give me a product plan for next year by this afternoon.” You will end up with a PPT that nobody can implement.

Second Pitfall: Overestimating AI’s Ability to Retrieve “Private Data”

While conducting competitor analysis, my initial thought was grand: I just needed to input the competitor names (like Beisen, Xinshe, Feishu, etc.), and AI would automatically fetch their upgrade logs and financial reports from the past 1-2 years, providing me with a product roadmap and strategic changes.

It did output a lengthy document, but upon closer inspection, it was mostly a patchwork of publicly available marketing materials, release notes, and even PR statements. It offered almost no valuable insights into how the products had actually evolved.

If I had thought it through, I would have avoided this unrealistic expectation.

The reason is simple: for SaaS companies, the real “user manuals” and “upgrade logs” are proprietary assets that sit behind login authentication. No matter how advanced AI is, it cannot get past that access barrier on its own.

The solution: Return to the “external brain” concept. I changed my approach and fed AI with the manually compiled “competitor upgrade logs” as raw material. I instructed it: “Based on these real iteration logs, help me analyze their evolution roadmap and insights for us.”

This time, the results met my expectations.
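The “external brain” approach can be sketched roughly as follows: the manually compiled logs are placed directly in the prompt, so the model works from supplied material rather than whatever it can find publicly. This is a minimal illustration, not the actual Skill; the function name `build_competitor_prompt`, the competitor name, and the log entries are all hypothetical placeholders.

```python
def build_competitor_prompt(competitor: str, log_entries: list[str]) -> str:
    """Embed manually compiled upgrade-log entries in the prompt as context."""
    if not log_entries:
        # Without real logs the model can only fall back on public marketing copy.
        raise ValueError("provide at least one upgrade-log entry")
    logs = "\n".join(f"- {entry}" for entry in log_entries)
    return (
        f"Competitor: {competitor}\n"
        f"Upgrade logs (manually compiled):\n{logs}\n\n"
        "Based on these real iteration logs, help me analyze their "
        "evolution roadmap and insights for us."
    )


# Placeholder inputs for illustration only; real entries come from your own notes.
prompt = build_competitor_prompt(
    "ExampleCompetitor",
    ["placeholder entry A", "placeholder entry B"],
)
print(prompt)
```

Refusing to build a prompt from an empty log list is a deliberate choice in this sketch: it makes the missing context visible instead of letting the model quietly improvise.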

In summary: This pitfall fundamentally stems from a lack of context. You can think of large models as world-renowned chefs. However, if you don’t provide them with your family’s secret recipe and specific ingredients, they cannot create a dish that matches your taste.

Do not have unreasonable expectations of AI, thinking that a simple prompt will yield effortless results.

Third Pitfall: Over-reliance on AI’s Ability to Infer and Understand Business Needs

In the past year and a half, I have accumulated over 1000 requirements across two subsystems I manage. Previously, I spent half an hour each week manually identifying which module each requirement belonged to (e.g., scheduling - cyclic scheduling - odd/even weeks).

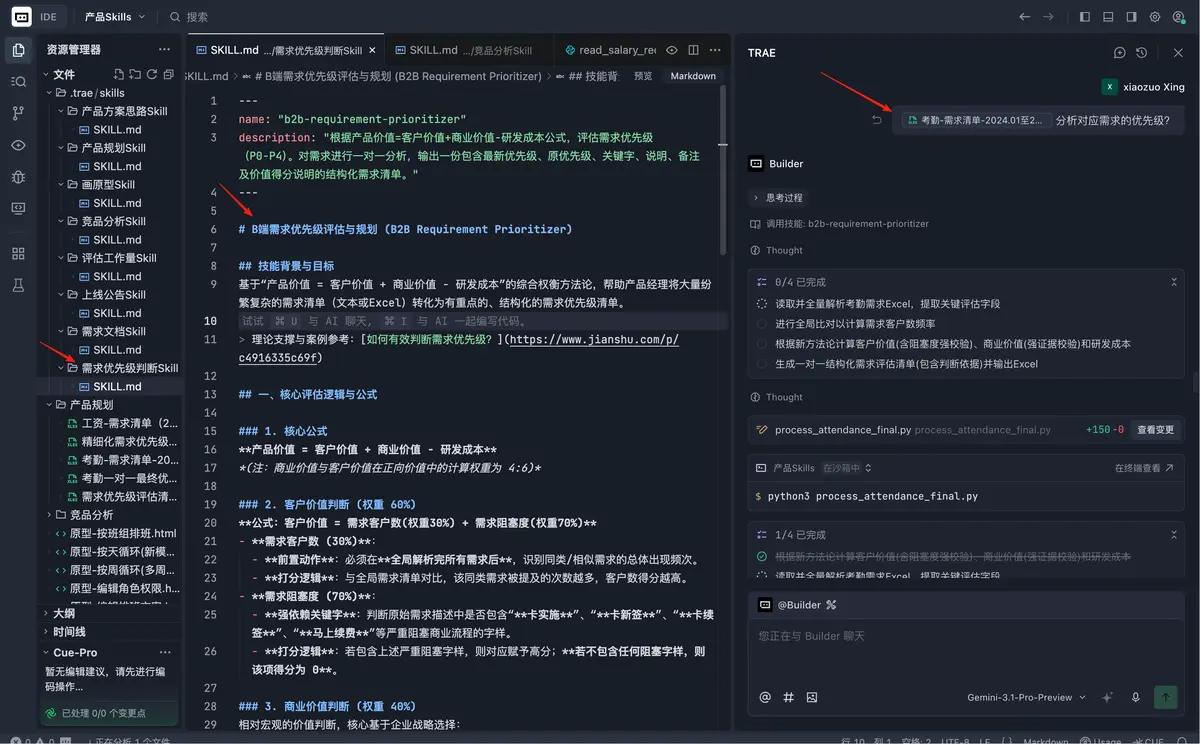

Initially, I planned to create a Skill to process these 1000 requirements. I wanted it to not only merge similar requirements (e.g., combine five cyclic scheduling requests into one) but also apply my prioritization methodology (Product Value = Customer Value + Business Value - Emotional Cost × 2) to output a refined high-priority requirements list.

The result? Reality hit me hard again. I overestimated AI’s understanding of our specific business and the clarity of the requirements provided by the business side. AI could not accurately “merge similar items” as I expected; its merging results were mostly baseless and riddled with logical flaws.

The final compromise: After several adjustments, I gave up on the fantasy of having it “deeply understand and merge” requirements and took a more pragmatic approach. I only required it to assess priorities, without merging.

I input the Excel sheet of 1000 requirements, and it output the same 1000 requirements, only adding priority assessments (P0-P4) according to my methodology. This way, I only needed to focus on the requirements marked as P0 and P1.
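To make the “score only, no merging” idea concrete, here is a small sketch of the scoring step. It reads the formula as Customer Value + Business Value - 2 × Emotional Cost, which is an interpretation of the methodology as written. The score ranges, the cut-offs that map a score to P0-P4, and all names in the code are assumptions for illustration; the article does not specify them.

```python
from dataclasses import dataclass


@dataclass
class Requirement:
    title: str
    customer_value: int   # assumed integer score; the real scale is not given
    business_value: int
    emotional_cost: int


def product_value(req: Requirement) -> int:
    # Product Value = Customer Value + Business Value - Emotional Cost x 2
    return req.customer_value + req.business_value - 2 * req.emotional_cost


# Hypothetical cut-offs; the article does not say how scores map to P0-P4.
THRESHOLDS = [(8, "P0"), (6, "P1"), (4, "P2"), (2, "P3")]


def priority(req: Requirement) -> str:
    score = product_value(req)
    for minimum, label in THRESHOLDS:
        if score >= minimum:
            return label
    return "P4"


def prioritize(reqs: list[Requirement]) -> list[tuple[Requirement, str]]:
    # One output row per input row: nothing is merged, a label is only added.
    return [(req, priority(req)) for req in reqs]


def focus_list(rows: list[tuple[Requirement, str]]) -> list[str]:
    # Keep only the items worth reviewing by hand.
    return [req.title for req, label in rows if label in ("P0", "P1")]


# Made-up scores for illustration.
backlog = [
    Requirement("Odd/even week cyclic scheduling", 5, 5, 1),
    Requirement("Rename a menu label", 1, 1, 1),
]
rows = prioritize(backlog)
print(focus_list(rows))
```

Because the output has exactly one row per input row, it is easy to check that nothing was silently dropped or merged along the way.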

In summary: This third pitfall is essentially a combination of the first two. I made the mistake of expecting it to handle multiple tasks (merging requirements + assessing priorities) simultaneously, while also expecting it to possess business intuition without providing sufficient context.

Conclusion

After encountering these three major pitfalls, I gained a new understanding of how to use AI:

When creating Skills, it is essential to strictly adhere to the “single responsibility” principle and provide sufficient context.

However, this does not mean our workflows must be fragmented. In practice, we can combine multiple single-function Skills and utilize context as input material.

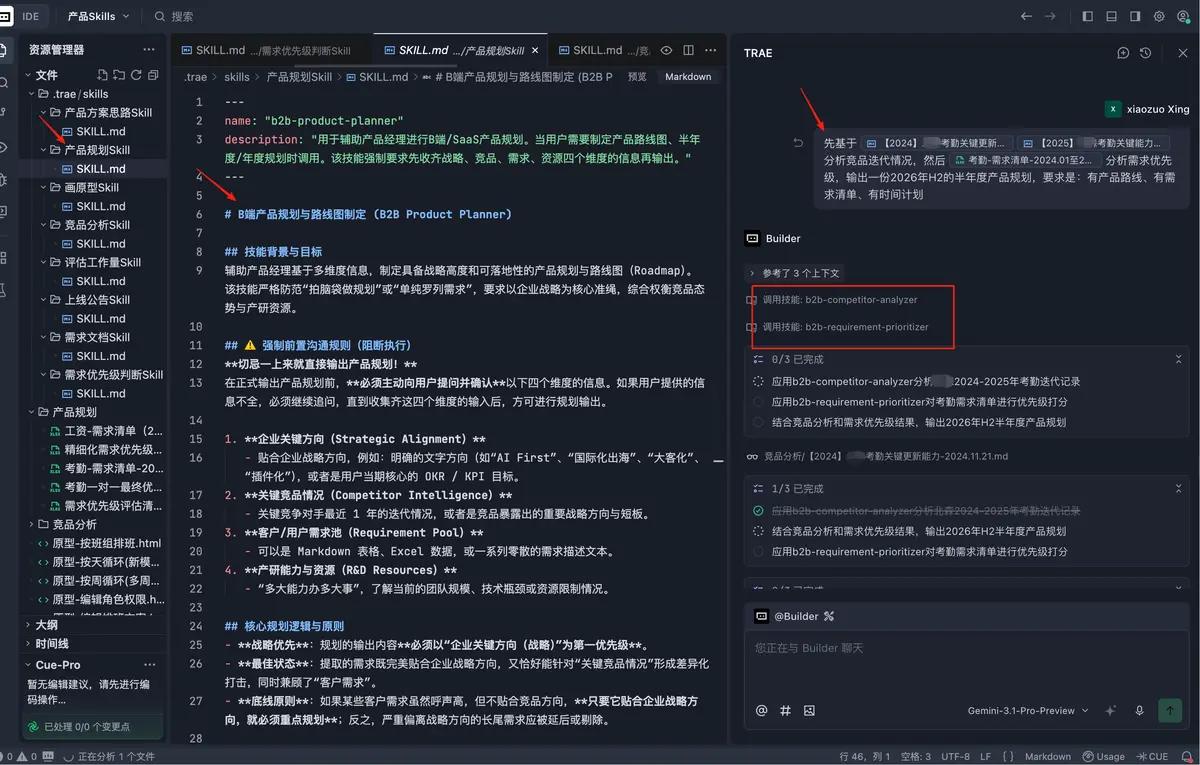

For example, when preparing the mid-year plan for 2026, my workflow now consists of:

- Providing the internally collected competitor upgrade log file and invoking the [Competitor Analysis Skill].

- Exporting the 1000 requirement list file and invoking the [Requirement Prioritization Skill].

- Automatically combining the outputs of the first two Skills, along with our company’s strategy and resource allocation, and invoking the [Product Planning Skill].
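The three steps above can be sketched as a simple pipeline in which each Skill does one job and the last one receives the outputs of the first two as context. This is only an illustration of the composition, assuming a Skill can be modeled as a function from input text to output text; `run_planning_workflow`, `stub_skill`, and the section labels are hypothetical names, and the stubs stand in for real Skill invocations.

```python
from typing import Callable

# Assumption: a Skill is modeled as a function from input text to output text.
Skill = Callable[[str], str]


def run_planning_workflow(
    competitor_logs: str,
    requirement_list: str,
    strategy: str,
    competitor_analysis: Skill,
    requirement_prioritization: Skill,
    product_planning: Skill,
) -> str:
    # Steps 1 and 2: each Skill sees only the material for its own job.
    roadmap = competitor_analysis(competitor_logs)
    priorities = requirement_prioritization(requirement_list)
    # Step 3: earlier outputs plus strategy become context for the planner.
    planning_input = "\n\n".join([
        "Competitor analysis:\n" + roadmap,
        "Prioritized requirements:\n" + priorities,
        "Strategy and resources:\n" + strategy,
    ])
    return product_planning(planning_input)


def stub_skill(name: str) -> Skill:
    # Placeholder standing in for a real Skill invocation.
    return lambda text: f"[{name} result based on {len(text)} characters of input]"


plan = run_planning_workflow(
    competitor_logs="(contents of the competitor upgrade log file)",
    requirement_list="(contents of the exported requirement list)",
    strategy="(company strategy and resource allocation)",
    competitor_analysis=stub_skill("Competitor Analysis Skill"),
    requirement_prioritization=stub_skill("Requirement Prioritization Skill"),
    product_planning=stub_skill("Product Planning Skill"),
)
print(plan)
```

Passing the Skills in as parameters keeps each one replaceable and testable on its own, which matches the single-responsibility idea.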

Ultimately, AI is just the chef wielding the spatula, while you are the one who decides what dish to prepare and makes sure the ingredients are top-notch.
